Building a Robust Machine Learning Pipeline with ZenML

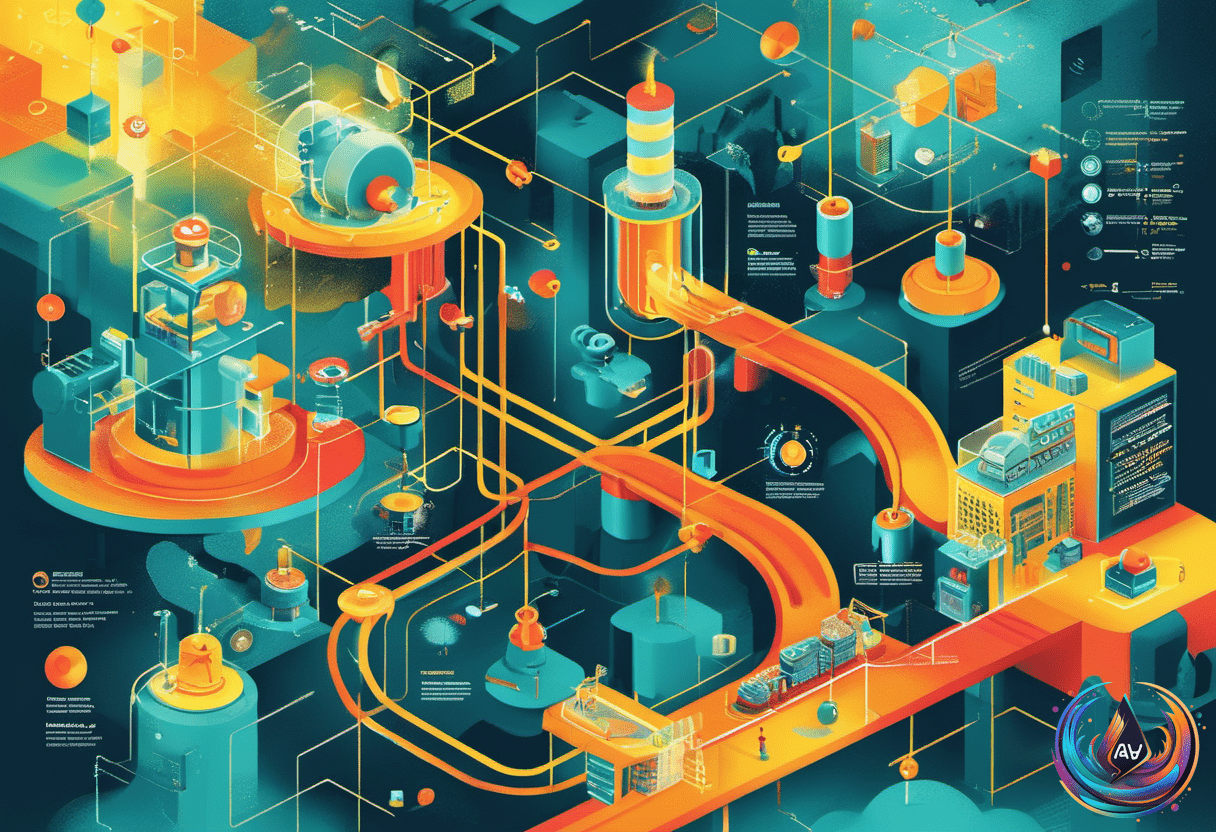

Creating a reliable machine learning pipeline can seem complex, but with the right tools, it becomes much simpler. ZenML is a framework that helps you build, track, and manage end-to-end ML workflows. This guide walks through setting up a production-grade pipeline, including custom components, metadata tracking, and hyperparameter tuning.

Setting Up the Environment and Initializing ZenML

The first step is preparing your environment: install ZenML, scikit-learn, pandas, and pyarrow, then initialize a new ZenML project, which creates a workspace for your pipeline components. Working in a clean directory keeps the project's files isolated, and setting environment variables lets you control logging verbosity and analytics reporting.
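The setup described above can be sketched in a few lines of Python. This is a minimal illustration, not the exact setup used for this guide: the helper name `prepare_workspace` is hypothetical, and the two environment variable names are the ones I understand ZenML to read, so check them against the documentation for your version. The packages themselves would be installed beforehand, for example with `pip install zenml scikit-learn pandas pyarrow`.

```python
import os
from pathlib import Path


def prepare_workspace(root: str, analytics: bool = False, verbosity: str = "WARN") -> Path:
    """Create a clean working directory and set ZenML-related environment variables."""
    workdir = Path(root).resolve()
    workdir.mkdir(parents=True, exist_ok=True)

    # Assumed variable names; verify them against the ZenML docs for your version.
    os.environ["ZENML_ANALYTICS_OPT_IN"] = "true" if analytics else "false"
    os.environ["ZENML_LOGGING_VERBOSITY"] = verbosity

    # Commands run afterwards (such as `zenml init`) act on the current directory.
    os.chdir(workdir)
    return workdir
```

Calling `prepare_workspace("zenml_demo")` and then running `zenml init` from that directory would give you an isolated project to work in.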

With the environment ready, you bootstrap the ZenML repository. This sets up the infrastructure the pipeline needs to track artifacts, manage metadata, and keep runs reproducible. From here, you can start defining your data processing and modeling steps, with ZenML recording what each one consumes and produces.

Building the Data and Model Components

The core of the pipeline is data loading, preprocessing, and model training. Using Python, you load a dataset, such as breast cancer data, and split it into training and testing sets. To handle domain-specific data, you create a custom materializer: a component that serializes complex dataset objects and automatically extracts metadata from them, so each dataset is stored together with a description of its contents.
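To make the materializer idea concrete, here is a plain-Python sketch of the three jobs it performs: save an object, load it back, and describe it. It deliberately does not subclass ZenML's `BaseMaterializer`; the `DatasetBundle` and `DatasetBundleStore` names are hypothetical, and JSON stands in for whatever storage format the real component uses (the pyarrow dependency suggests a columnar format, but that is an assumption).

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path
from typing import Any, Dict, List


@dataclass
class DatasetBundle:
    """Hypothetical container for one split of a tabular dataset."""

    features: List[List[float]]
    target: List[int]
    feature_names: List[str]


class DatasetBundleStore:
    """Stand-in for a materializer: serialize, deserialize, and describe a bundle."""

    FILENAME = "bundle.json"

    def __init__(self, uri: str) -> None:
        # Directory where this artifact version is stored.
        self.uri = Path(uri)

    def save(self, bundle: DatasetBundle) -> None:
        self.uri.mkdir(parents=True, exist_ok=True)
        (self.uri / self.FILENAME).write_text(json.dumps(asdict(bundle)))

    def load(self) -> DatasetBundle:
        payload = json.loads((self.uri / self.FILENAME).read_text())
        return DatasetBundle(**payload)

    def extract_metadata(self, bundle: DatasetBundle) -> Dict[str, Any]:
        # Summary values that would be logged alongside the stored dataset.
        n_rows = len(bundle.target)
        return {
            "n_rows": n_rows,
            "n_features": len(bundle.feature_names),
            "positive_rate": sum(bundle.target) / n_rows if n_rows else 0.0,
        }
```

In a real ZenML project the same three methods would live on a materializer subclass registered for the dataset type, so the framework calls them whenever a step returns or receives that type.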

Next, you define modular pipeline steps for data transformation, model training, and evaluation. These steps are connected in a way that allows for easy adjustments and testing. The custom materializer enhances transparency by logging detailed metadata for each dataset version, which is crucial for tracking changes and reproducing results later.
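As a rough illustration of what modular steps look like, the sketch below writes loading, training, and evaluation as separate functions using scikit-learn only. The function names, the choice of logistic regression, and the 80/20 split are assumptions made for this example. In ZenML, each function would typically be wrapped with the `step` decorator and the chaining function with the `pipeline` decorator; that wiring is left out here so the code runs without a ZenML installation.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def load_data(test_size: float = 0.2, seed: int = 42):
    """Load the breast cancer dataset and return a stratified train/test split."""
    X, y = load_breast_cancer(return_X_y=True)
    return train_test_split(X, y, test_size=test_size, random_state=seed, stratify=y)


def train_model(X_train, y_train):
    """Scale the features, then fit a logistic regression classifier."""
    model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    model.fit(X_train, y_train)
    return model


def evaluate_model(model, X_test, y_test) -> dict:
    """Compute the two metrics the pipeline tracks for each candidate."""
    proba = model.predict_proba(X_test)[:, 1]
    return {
        "accuracy": float(accuracy_score(y_test, model.predict(X_test))),
        "roc_auc": float(roc_auc_score(y_test, proba)),
    }


def run_pipeline() -> dict:
    """Chain the steps; each one can be swapped or tested on its own."""
    X_train, X_test, y_train, y_test = load_data()
    model = train_model(X_train, y_train)
    return evaluate_model(model, X_test, y_test)
```

Because each step only depends on its inputs, you can replace `train_model` with another estimator or change the split without touching the rest of the chain.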

Implementing Hyperparameter Search and Model Selection

To improve model performance, the pipeline performs a hyperparameter search across multiple algorithms. This involves training several models with different configurations in parallel, a process known as fan-out. Each candidate model is evaluated on metrics like accuracy and ROC AUC, and key metadata is logged at each step.
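The fan-out pattern and the two metrics can be sketched with the standard library alone. Everything here is illustrative: `fan_out` and the candidate callables are hypothetical names, each callable stands in for "train one configuration and return its predicted scores on the test set", and a thread pool is used only to show the shape of parallel evaluation, not how ZenML schedules steps.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Sequence


def accuracy(y_true: Sequence[int], scores: Sequence[float], threshold: float = 0.5) -> float:
    """Fraction of samples whose thresholded score matches the label."""
    hits = sum(int(s >= threshold) == y for y, s in zip(y_true, scores))
    return hits / len(y_true)


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def fan_out(
    candidates: Dict[str, Callable[[], Sequence[float]]],
    y_true: Sequence[int],
) -> List[dict]:
    """Evaluate every candidate concurrently and return one result record each."""

    def evaluate(name: str) -> dict:
        scores = candidates[name]()  # hypothetical: trains and scores one configuration
        return {
            "name": name,
            "accuracy": accuracy(y_true, scores),
            "roc_auc": roc_auc(y_true, scores),
        }

    with ThreadPoolExecutor() as pool:
        # pool.map keeps results in the same order as the candidate names.
        return list(pool.map(evaluate, candidates))
```

Each result record carries the candidate's name and metrics, which is the kind of metadata you would log per candidate before comparing them.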

The next phase is fan-in: the results of all candidates are gathered, and the model with the best evaluation metrics is selected and promoted as the final candidate. Throughout this process, ZenML’s model control plane and artifact tracking keep every run transparent and reproducible. Caching speeds up repeated runs by reusing the outputs of steps whose code and inputs have not changed, saving time and computational resources.
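A fan-in step reduces the per-candidate results to a single winner. The sketch below assumes each result is a dictionary with `name`, `accuracy`, and `roc_auc` keys, ranks by ROC AUC, and breaks ties on accuracy; both the record layout and the ranking rule are assumptions for illustration, since the original does not state which metric decides.

```python
from typing import Dict, List


def select_best(
    results: List[Dict],
    primary: str = "roc_auc",
    tiebreak: str = "accuracy",
) -> Dict:
    """Return the candidate with the highest primary metric, using tiebreak on ties."""
    if not results:
        raise ValueError("no candidate results to select from")
    # Tuples compare element by element, so the tiebreak only matters on equal primaries.
    return max(results, key=lambda r: (r[primary], r[tiebreak]))
```

For example, given two candidates with equal ROC AUC, the one with higher accuracy is returned; that record identifies the model you would then promote.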

Overall, building a production-ready machine learning pipeline with ZenML combines modular design, detailed metadata tracking, and automated optimization. This approach ensures your models are not only effective but also easy to manage and reproduce over time.
