Introducing AntAngelMed: A Large Open-Source Medical AI Model

Researchers from China have released AntAngelMed, a new open-source medical language model. It is designed to handle complex medical queries efficiently despite its large size. It stands out for its sparse architecture, which lets it be both powerful and comparatively light on compute.

What Makes AntAngelMed Special

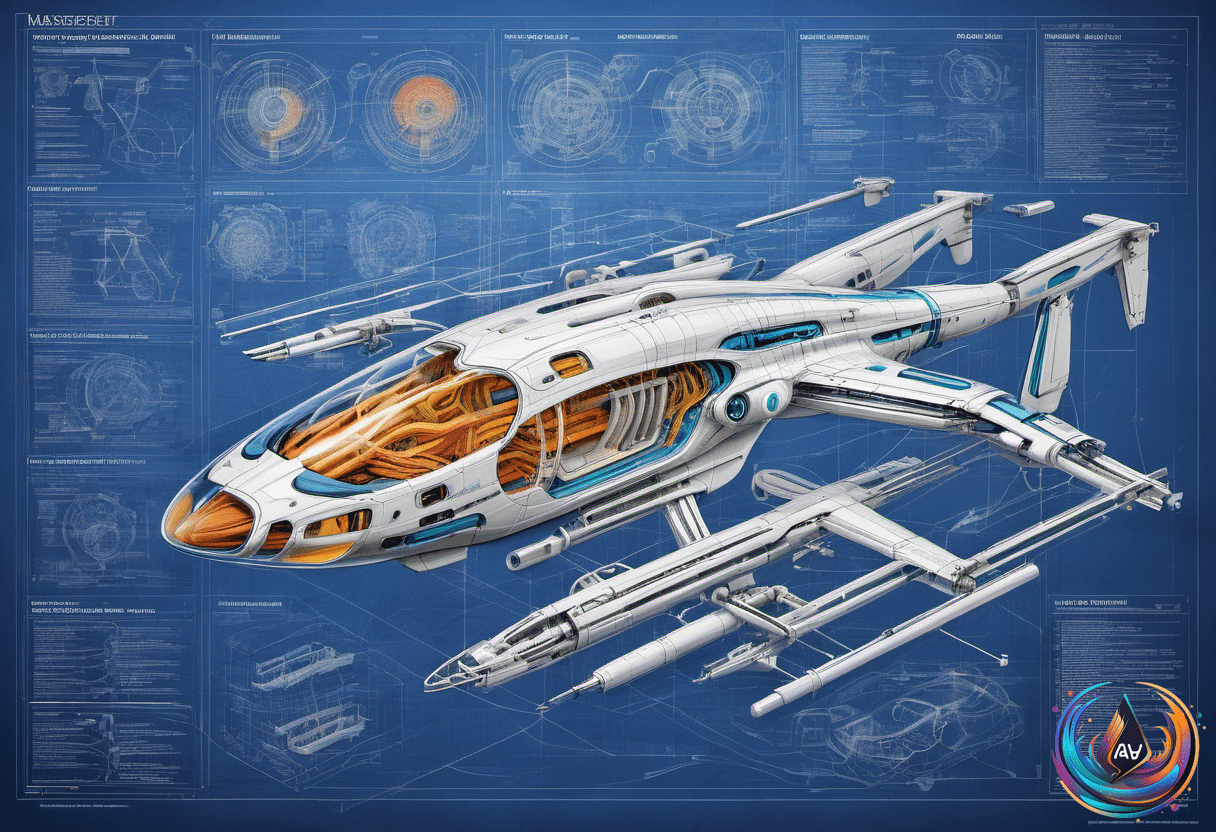

AntAngelMed has 103 billion parameters in total, but it doesn’t activate all of them at once. Instead, it uses a mixture-of-experts (MoE) architecture with a 1/32 activation ratio, and only about 6.1 billion parameters are active for any given token. This lets the model deliver high performance without the compute cost of running all 103 billion parameters every time.
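The figures above can be sanity-checked with a little arithmetic. The sketch below uses only the numbers quoted in this article, and the names are illustrative. Note that 1/32 of 103 billion is about 3.2 billion, not 6.1 billion. A plausible reading (an assumption here, not something the article states) is that the ratio describes the experts only, while components such as attention layers and embeddings run for every token.

```python
# Figures quoted in the article, in billions of parameters.
TOTAL_PARAMS_B = 103.0
ACTIVE_PARAMS_B = 6.1
ACTIVATION_RATIO = 1 / 32


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of all parameters that are used for a single token."""
    return active_b / total_b


# What a naive "total * ratio" estimate would give.
naive_active_b = TOTAL_PARAMS_B * ACTIVATION_RATIO

if __name__ == "__main__":
    share = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
    print(f"Active share of parameters: {share:.1%}")
    print(f"Naive 1/32 estimate: {naive_active_b:.2f}B")
```

Either way, well under a tenth of the model's weights take part in computing any single token.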

An MoE model splits parts of its network into many smaller ‘expert’ sub-networks. A routing mechanism decides which experts to use for each token, so only a small share of the model runs at any one time. Thanks to this design, AntAngelMed can reportedly match dense models that activate far more parameters per token (a dense model uses all of its parameters for every token). It can also process long inputs more quickly, which suits medical documents and multi-turn conversations.
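To make the routing idea concrete, here is a minimal toy sketch of top-k expert routing in plain Python. It is not AntAngelMed's implementation: the experts are hypothetical scalar multipliers, the router scores are random rather than produced by a learned layer, and the names `route_token` and `moe_layer` are invented for this illustration.

```python
import math
import random


def softmax(scores):
    """Turn raw router scores into probabilities."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_scores, top_k):
    """Pick the top_k experts for one token and renormalize their weights."""
    probs = softmax(router_scores)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    weight_sum = sum(probs[i] for i in chosen)
    return [(i, probs[i] / weight_sum) for i in chosen]


def moe_layer(token, experts, router_scores, top_k):
    """Run only the selected experts and mix their outputs by weight."""
    output = 0.0
    for idx, weight in route_token(router_scores, top_k):
        output += weight * experts[idx](token)
    return output


# Toy setup (hypothetical): 32 experts, each a simple scalar multiplier.
NUM_EXPERTS = 32
TOP_K = 1
experts = [lambda x, scale=i + 1: scale * x for i in range(NUM_EXPERTS)]

# In a real model the scores come from a learned layer applied to the token.
rng = random.Random(0)
router_scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_token(router_scores, TOP_K)
result = moe_layer(2.0, experts, router_scores, TOP_K)
```

With `TOP_K = 1`, only one of the 32 toy experts is evaluated for the token, while the other 31 do no work. That skipped computation is where the efficiency gain comes from.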

How the Model Was Trained

The training process for AntAngelMed involves three main stages. First, the model undergoes continual pre-training on large medical corpora, including encyclopedias, web data, and academic papers. Because this stage continues from an already pre-trained model, it adds medical knowledge on top of existing general reasoning ability.

Next, it goes through supervised fine-tuning on a diverse dataset that includes both general reasoning tasks and medical scenarios. This helps the model handle complex questions, diagnostic reasoning, and ethical considerations in medicine. Finally, reinforcement learning refines its behavior, emphasizing empathy, safety, and accuracy to reduce mistakes and hallucinations in medical responses.

Performance and Practical Uses

On AI accelerator hardware, AntAngelMed is reported to exceed 200 tokens per second, roughly three times the speed of comparable dense models. It also supports a 128K-token context window, which is well suited to analyzing lengthy medical records or detailed patient histories.

The team has also released a quantized version that runs even more efficiently. Additional inference optimizations boost throughput further, making it suitable for real-time medical applications. According to the reported benchmarks, AntAngelMed outperforms many existing models on evaluations of medical knowledge, safety, and reasoning across multiple datasets.
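The article does not say which quantization scheme the team used. As a generic illustration of the idea only, the sketch below shows simple symmetric 8-bit quantization: weights are stored as small integers plus one shared scale, which cuts memory use at the cost of a small rounding error. The function names and sample weights are made up for this example.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only to show the round trip.
weights = [0.12, -0.5, 0.33, 0.0, -0.07]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Largest difference between an original weight and its restored value.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each restored value differs from the original by at most about half the scale, which is why a well-chosen scheme can shrink a model with little loss in quality.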

Overall, AntAngelMed is a significant step forward in open-source medical AI. Its architecture and training process make it a promising tool for medical research, clinical decision support, and healthcare automation, while remaining more efficient than comparable dense models.
